What's New

[2025.11] Check out our newly released Awesome-Spatial-VLMs GitHub repo, a community-maintained companion resource to our survey paper, "Spatial Intelligence in Vision-Language Models: A Comprehensive Survey"!

[2025.11] 1 paper accepted by AAAI 2026!

[2025.09] We have 3 papers accepted by NeurIPS 2025!

[2025.08] 3 papers accepted by EMNLP 2025 (1 main track + 2 findings).

[2025.05] 1 paper accepted by ACL 2025.

[2025.05] We will organize The 12th IEEE International Workshop on Analysis and Modeling of Faces and Gestures (AMFG) at ICCV 2025.

[2025.02] Our paper "Blur-Aware Reconstruction of Dynamic Scenes via Gaussian Splatting" has been accepted by CVPR 2025. Congratulations to Yiren!

[2024.12] 1 paper accepted by AAAI 2025.

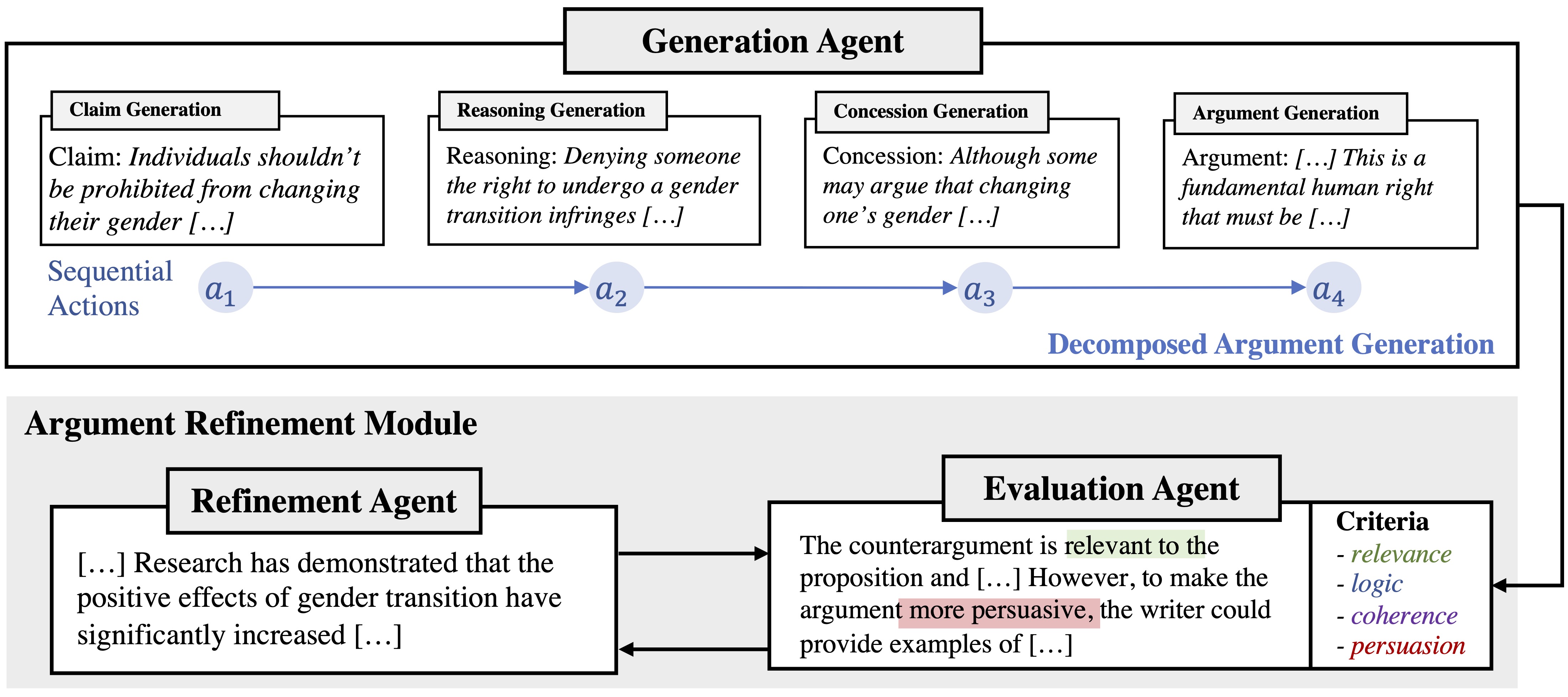

[2024.11] Our paper on multi-agent argument generation has been accepted by COLING 2025.

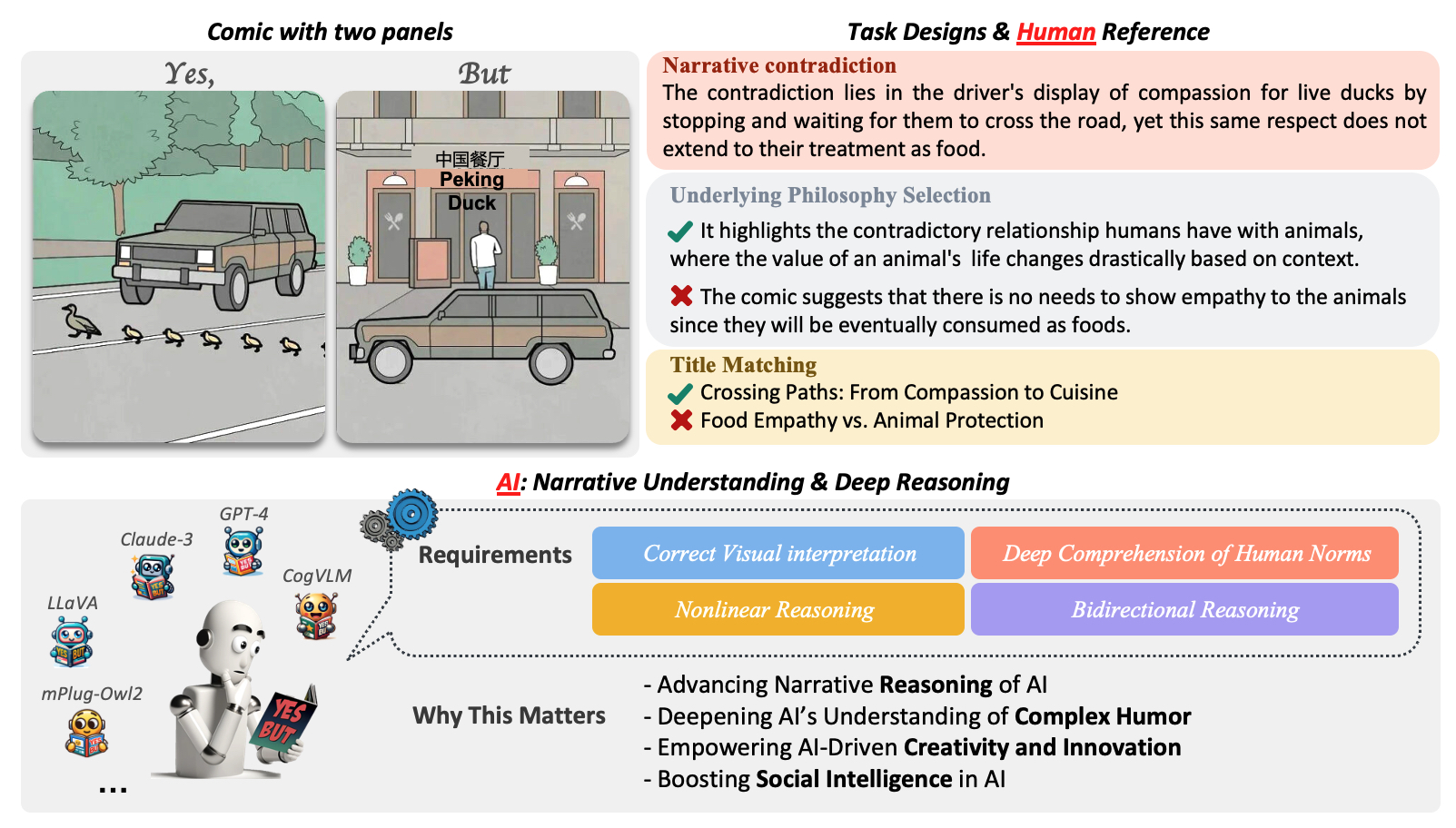

[2024.09] Our paper "Cracking the Code of Juxtaposition" has been accepted by NeurIPS 2024 as an Oral!

[2024.09] We have 1 paper accepted by EMNLP 2024.

[2024.08] I received the Teaching Award from the Department of Computer and Data Sciences at CWRU.

[2024.07] One paper on 3D scene editing has been accepted by ACM MM 2024.

[2024.07] Our paper on argument generation has been accepted by INLG 2024 (Oral).

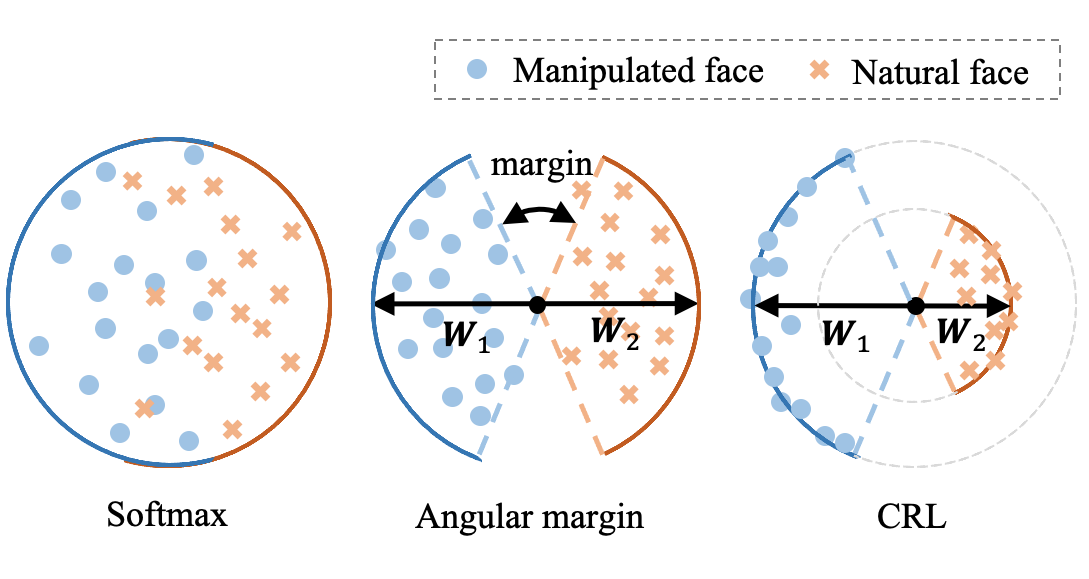

[2023.09] Our paper on deepfake detection has been accepted by ICDM 2023.

[2023.05] I will be joining the Department of Computer and Data Sciences at Case Western Reserve University (CWRU) as a Tenure-Track Assistant Professor starting Fall 2023!

[2023.04] We will organize The 11th IEEE International Workshop on Analysis and Modeling of Faces and Gestures (AMFG) at ICCV 2023.

[2023.04] I received the Dissertation Completion Fellowship from Northeastern University.

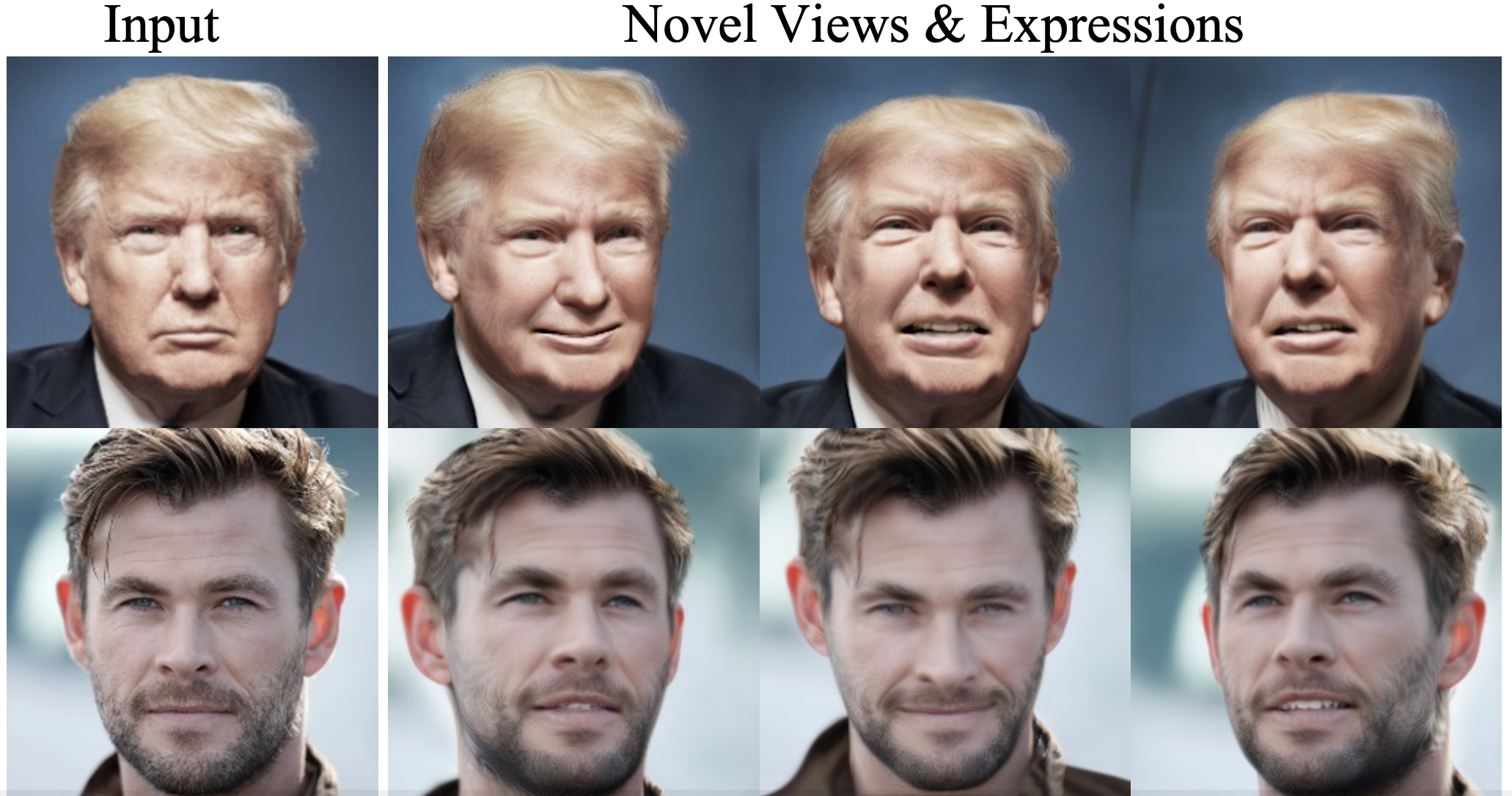

[2023.02] One paper on 3D face animation ("NeRFInvertor") has been accepted by CVPR 2023.


Openings: I am continuously looking for highly motivated master's students and interns to work on computer vision and machine learning (e.g., 3D vision, VLM, VLA, generative models). Please send me your CV if interested.

Biography

I am a tenure-track assistant professor in the Department of Computer and Data Sciences at Case Western Reserve University. Before that, I received my Ph.D. degree in Computer Engineering from Northeastern University in Aug. 2023, under the guidance and supervision of Prof. Yun (Raymond) Fu in the SMILE Lab. I received my master's degree in Electrical & Computer Engineering from Northeastern University in Dec. 2018, and my B.E. degree from the School of Electronic Engineering, Wuhan University of Technology, China, in Jul. 2016. From Fall 2016, I was a member of the Augmented Cognition Lab (ACLab), under the supervision of Prof. Sarah Ostadabbas. My research interests broadly include visual synthesis and understanding, multi-modality fusion, and transfer learning. My recent research topics lie in unified representation learning, 3D modeling and animation, and a wide range of computer vision applications, including image synthesis, 3D novel-view synthesis, face restoration, forensics detection, and 3D model generation and rendering.

Education

- 01/2019 - 08/2023: Ph.D. in Computer Engineering, Northeastern University, Boston, USA

- 09/2016 - 12/2018: M.S. in Electrical and Computer Engineering, Northeastern University, Boston, USA

- 09/2012 - 07/2016: B.E. in Electrical and Information Engineering, Wuhan University of Technology, Wuhan, China

Teaching

- Deep Generative Models course (CSDS 570), Case Western Reserve University, USA, 2025 Spring

- Computer Vision course (CSDS 465), Case Western Reserve University, USA, 2024 Spring & Fall, 2025 Fall

- Special Topics on Generative Models course (CSDS 600), Case Western Reserve University, USA, 2023 Fall

- Data Visualization course (EECE 5642), Northeastern University, USA, 2021 Spring

Patents

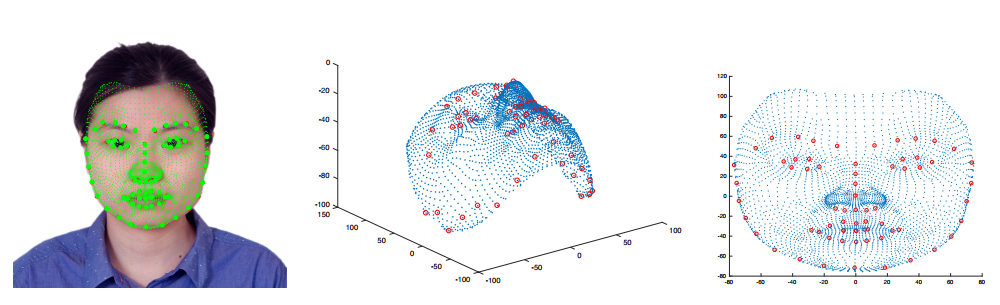

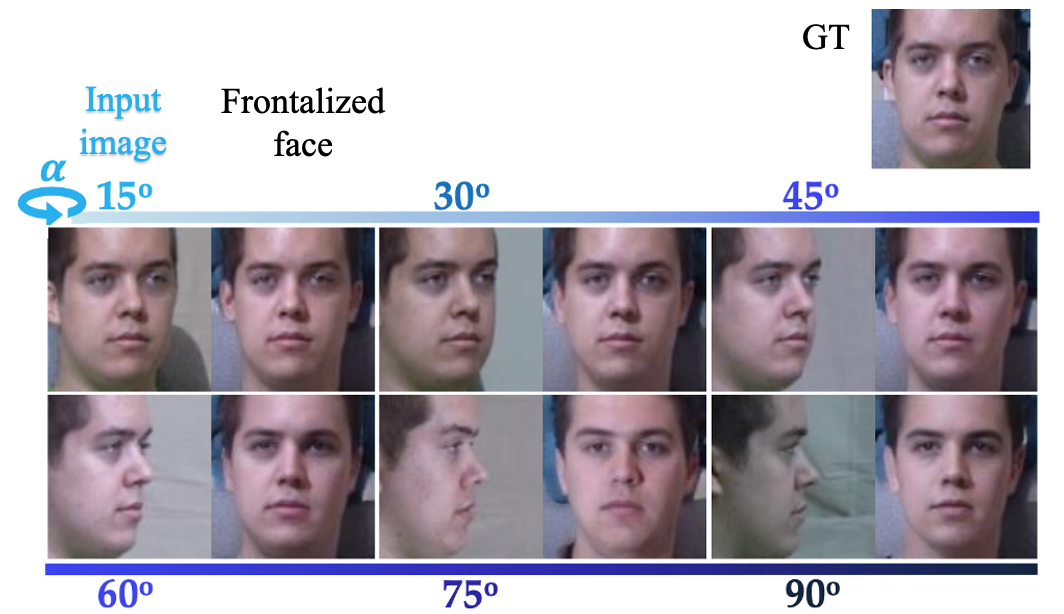

- Yun Fu, Yu Yin, “Frontal Face Synthesis from Low-Resolution Images.” U.S. Patent Application No. 17/156,204.

- Yu Yin, Will A. Hutchcroft, Ivaylo Boyadzhiev, Sing Bing Kang, Yujie Li, Pierre Moulon, “Automated Identification And Use Of Building Floor Plan Information.” U.S. Patent Application No. 17/472,527.

Awards

- Teaching Award, Department of Computer and Data Sciences, Case Western Reserve University, USA, 2024

- PhD Spotlight, Northeastern University, USA, 2023

- Women Who Empower Innovator Awards Semi-Finalist, Northeastern University, USA, 2023

- Dissertation Completion Fellowship, Northeastern University, USA, 2023

- NSF I-Corps Grant, 2022

- PhD Network Grant, Northeastern University, USA, 2019, 2023

- Conference Travel Grant: AAAI'20, SIG MM'22, CVPR'23

- Excellent Bachelor Thesis of Wuhan University of Technology, 2016

- Scholarship of Wuhan University of Technology, 2013, 2014, 2015

Activities

- Journal Reviewer

  - IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI)

  - IEEE Transactions on Image Processing (TIP)

  - IEEE Transactions on Neural Networks and Learning Systems (TNNLS)

  - IEEE Transactions on Cybernetics (TCyber)

  - IEEE Transactions on Circuits and Systems for Video Technology (TCSVT)

  - IEEE Internet of Things Journal (IoT)

  - Information Sciences (Elsevier)

- Program Committee Member

  - CVPR (2020-2025)

  - ECCV / ICCV (2020-2025)

  - NeurIPS (2024-2025)

  - ICLR (2024-2025)

  - ICML (2024-2025)

  - ACL Rolling Review (ARR) (2024-2025)

  - IJCAI (2019-2025)

  - AAAI (2021-2026)

  - ACM MM (2023-2025)

Publications

Zhe Hu*, Tuo Liang*, Jing Li, Yiren Lu, Yunlai Zhou, Yiran Qiao, Jing Ma, and Yu Yin (*co-first author)
Conference on Neural Information Processing Systems (NeurIPS), 2024 [Oral]
Abstract Paper arXiv Webpage Code Dataset

Zhe Hu, Yixiao Ren, Jing Li, and Yu Yin
Conference on Empirical Methods in Natural Language Processing (EMNLP), 2024
Abstract Paper arXiv Webpage Code Dataset

Yiren Lu, Jing Ma, and Yu Yin
ACM International Conference on Multimedia (ACM MM), 2024
Abstract Paper arXiv Webpage

Zhe Hu, Hou Pong Chan, and Yu Yin
ACL International Natural Language Generation Conference (INLG), 2024 [Oral]
Abstract Paper arXiv Slides

|

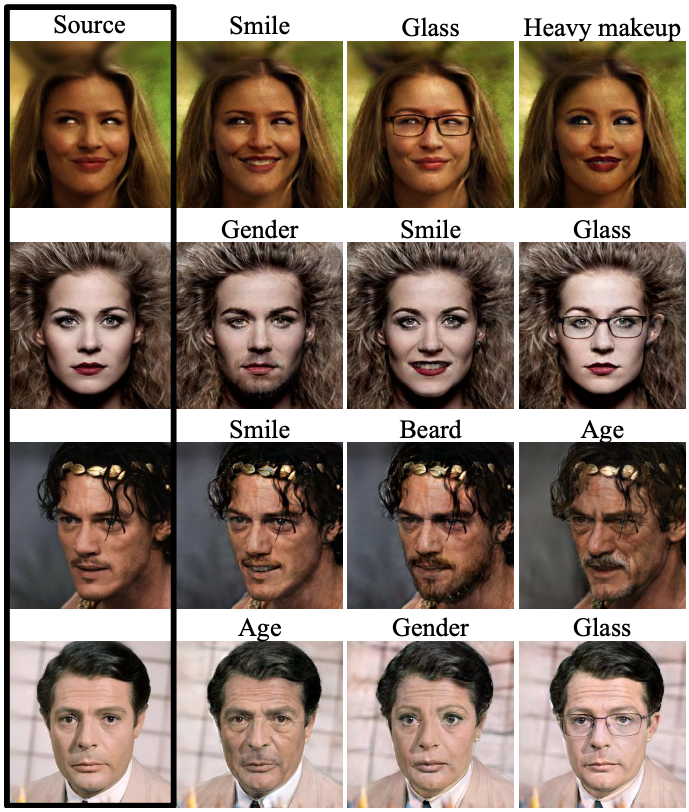

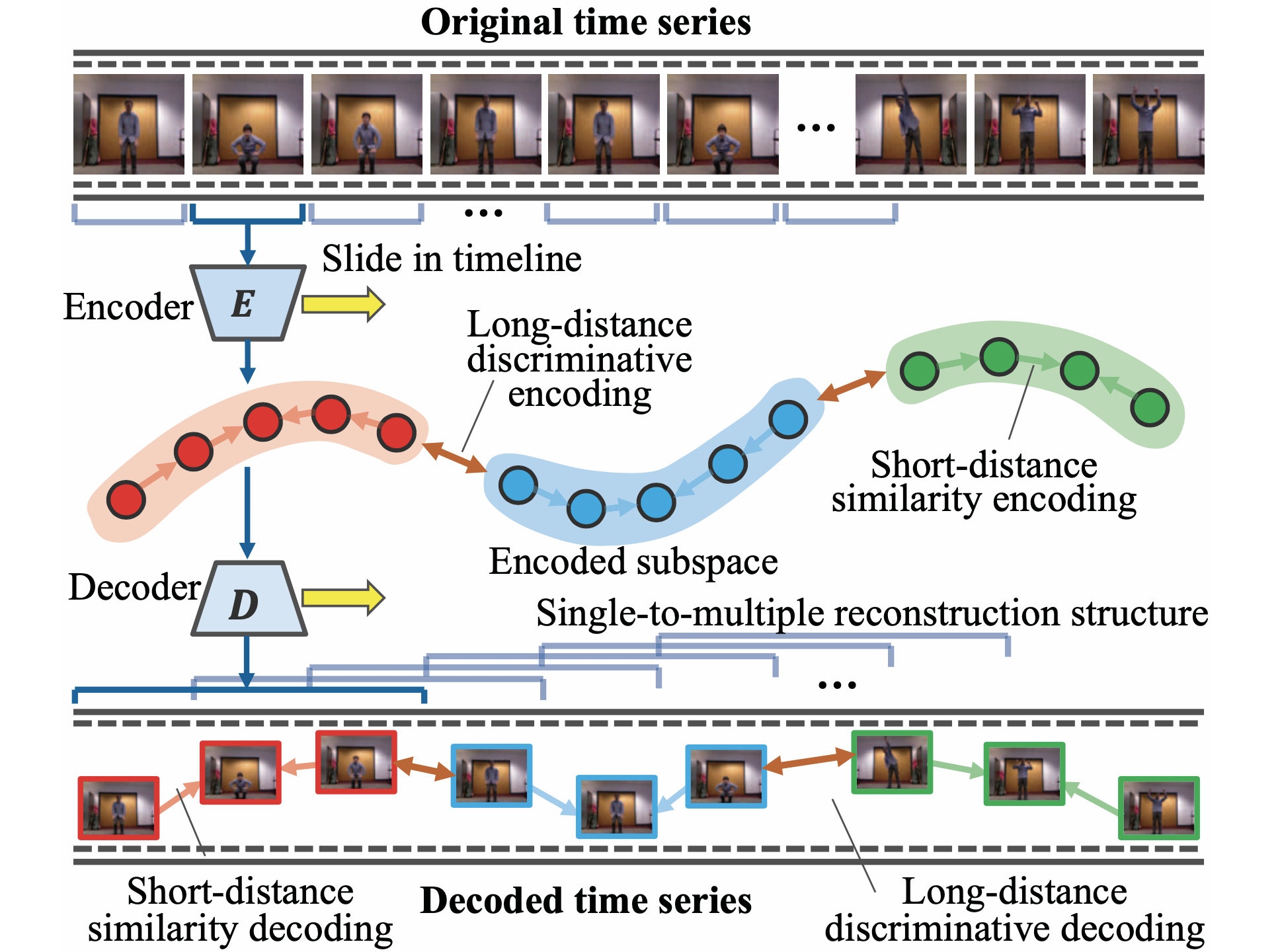

Yu Yin, Yue Bai, Yizhou Wang, and Yun Fu IEEE International Conference on Data Mining (ICDM), 2023 Abstract Paper |

|

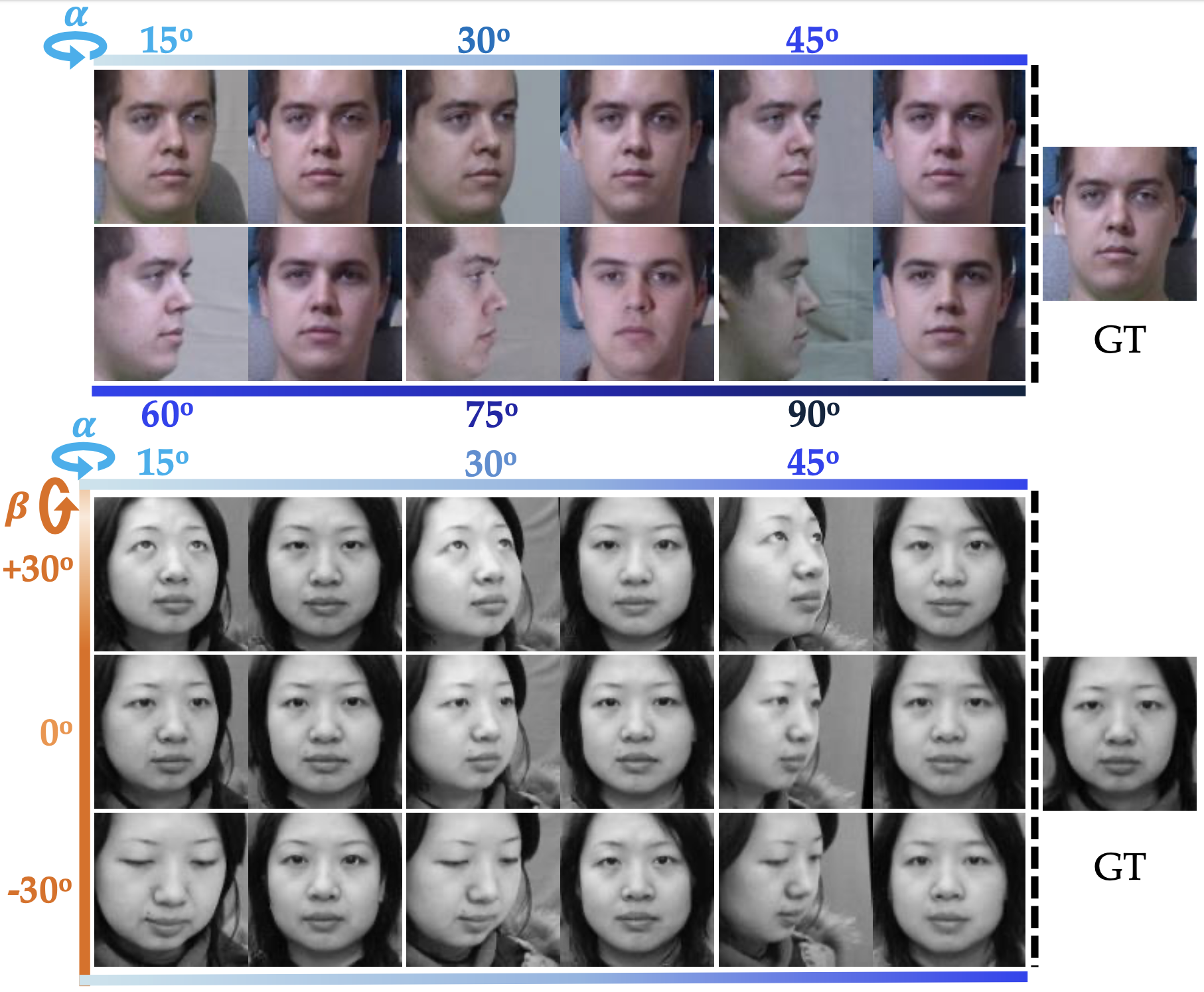

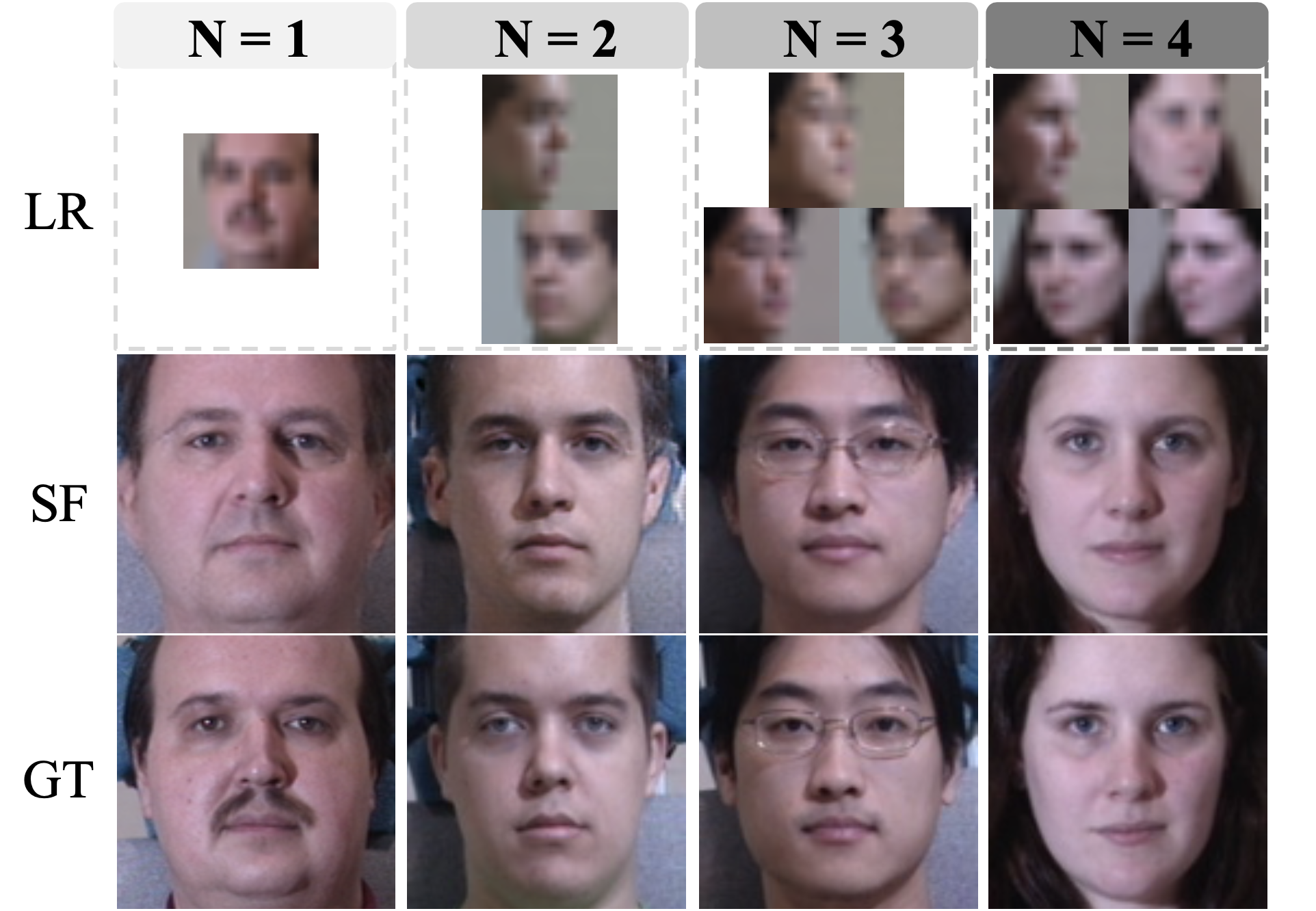

Yu Yin, Kamran Ghasedi, HsiangTao Wu, Jiaolong Yang, Xin Tong, and Yun Fu IEEE/CVF Computer Vision and Pattern Recognition Conference (CVPR), 2023 Abstract Paper arXiv Webpage Demo Code |

Yu Yin, Kamran Ghasedi, HsiangTao Wu, Jiaolong Yang, Xin Tong, and Yun Fu
Under review, 2022
Abstract arXiv

Yu Yin, Joseph P. Robinson, and Yun Fu
ACM International Conference on Multimedia (ACM MM), 2022
Abstract Paper

|

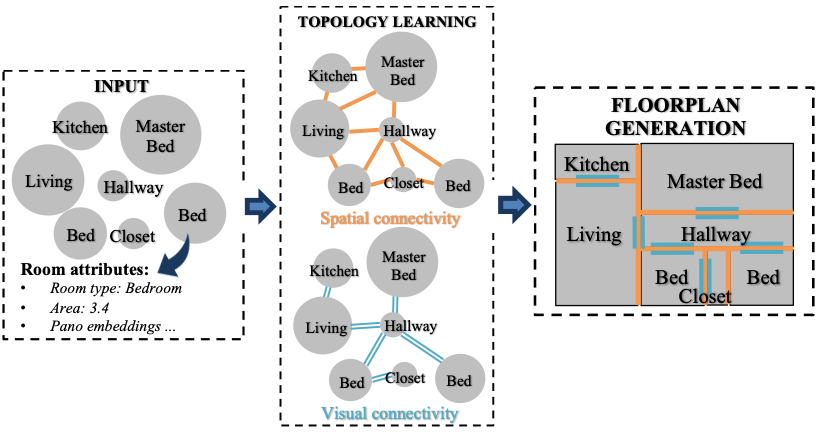

Yu Yin, Will Hutchcroft, Naji Khosravan, Ivaylo Boyadzhiev, Yun Fu, and Sing Bing Kang ACM International Conference on Multimedia Retrieval (ACM ICMR), 2022 Abstract Paper Presentation |

|

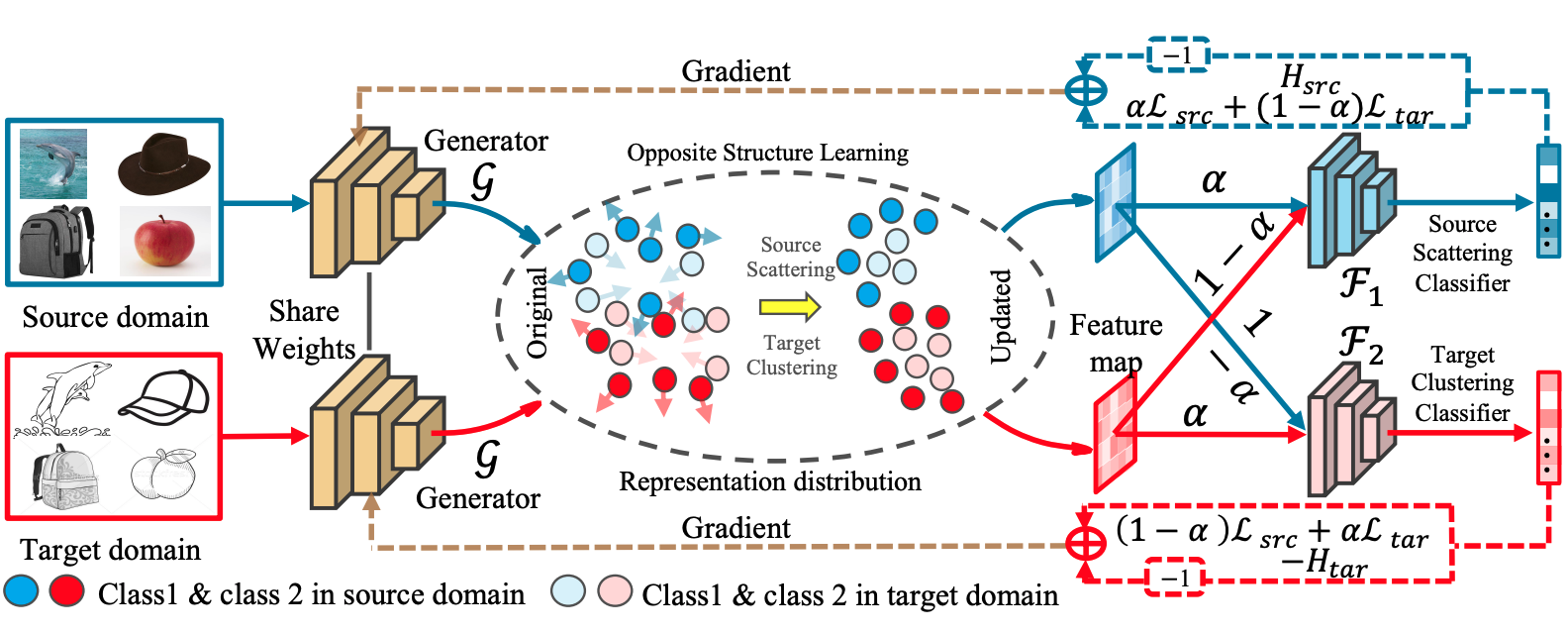

Can Qin, Lichen Wang, Qianqian Ma, Yu Yin, Huan Wang, and Yun Fu IEEE Transactions on Image Processing (TIP), 2022 Abstract Paper Code |

Yue Bai, Lichen Wang, Yunyu Liu, Yu Yin, Hang Di, and Yun Fu
IEEE Transactions on Image Processing (TIP), 2022
Abstract Paper

|

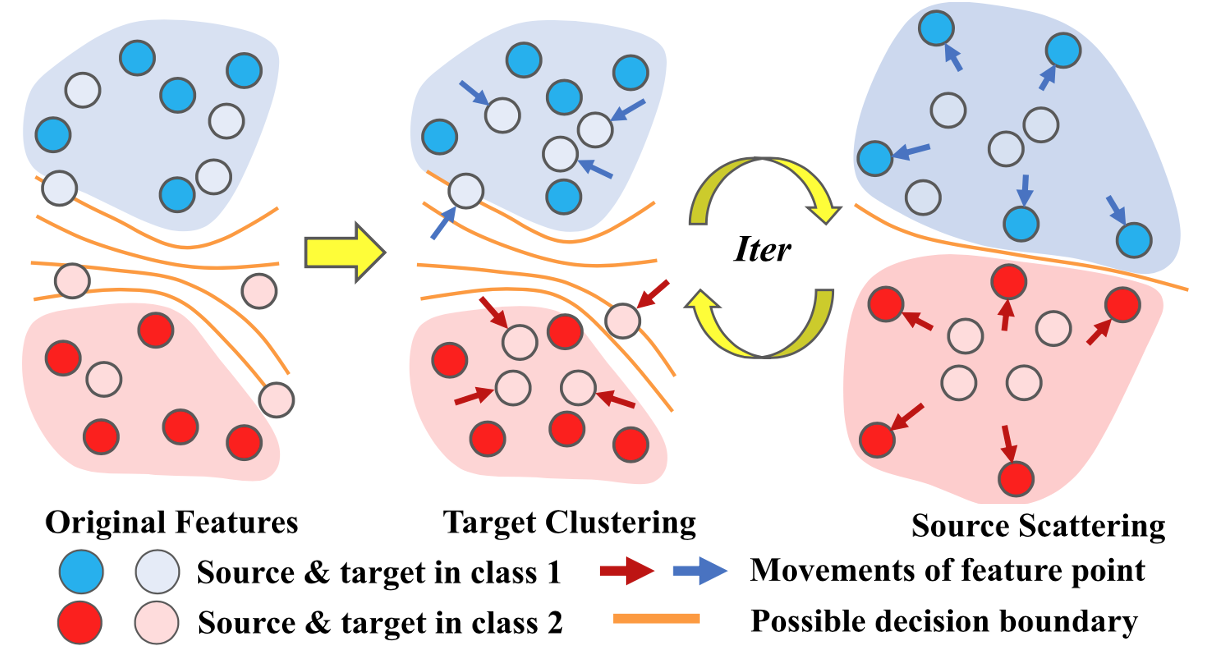

Yue Bai, Zhiqiang Tao, Lichen Wang, Sheng Li, Yu Yin, and Yun Fu SIAM International Conference on Data Mining (SDM), 2022 Abstract Paper |

|

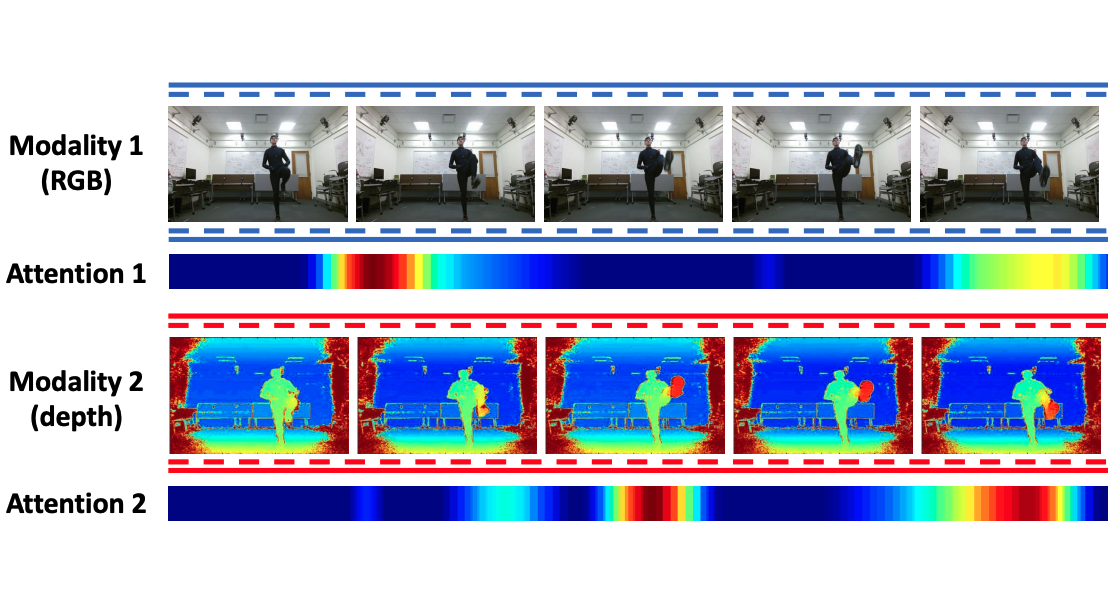

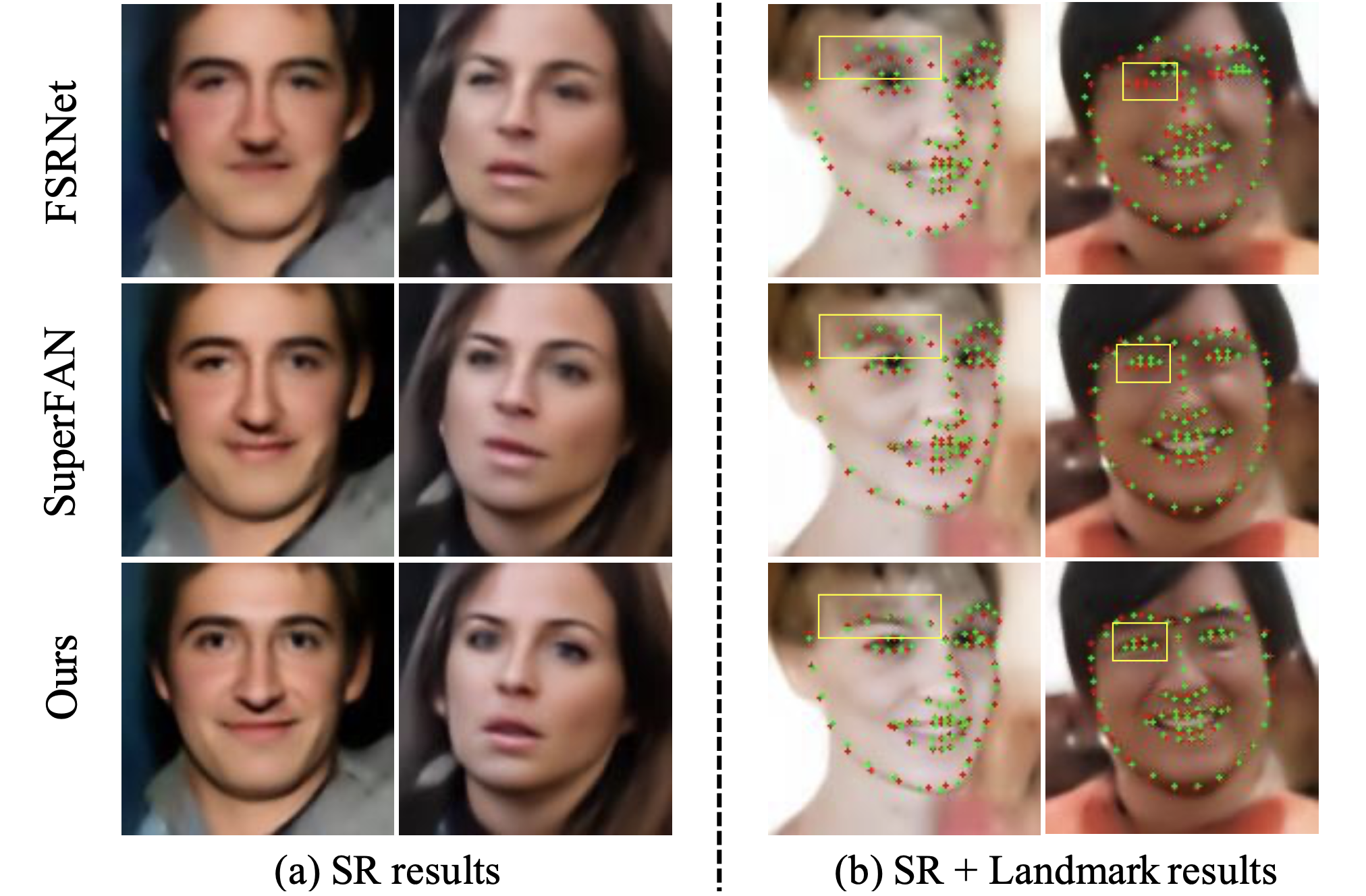

Yu Yin, Joseph P. Robinson, Songyao Jiang, Yue Bai, Can Qin, and Yun Fu ACM International Conference on Multimedia (ACM MM), 2021 Abstract Paper Code Supplements |

Can Qin, Lichen Wang, Qianqian Ma, Yu Yin, Huan Wang, and Yun Fu
SIAM International Conference on Data Mining (SDM), 2021
Abstract Paper Code

|

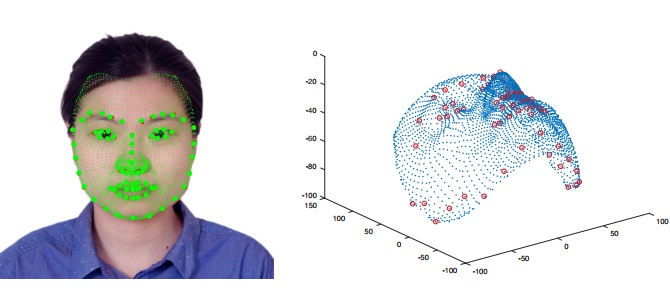

Yu Yin, Songyao Jiang, Joseph P. Robinson, and Yun Fu IEEE International Conference on Automatic Face and Gesture Recognition (FG), 2020 Abstract Paper Paper Code Presentation |

Yu Yin, Joseph P. Robinson, Yulun Zhang, and Yun Fu
AAAI Conference on Artificial Intelligence (AAAI), 2020
Abstract Paper Code

Yue Bai, Lichen Wang, Yunyu Liu, Yu Yin, and Yun Fu
IEEE International Conference on Data Mining (ICDM), 2020
Abstract Paper

|

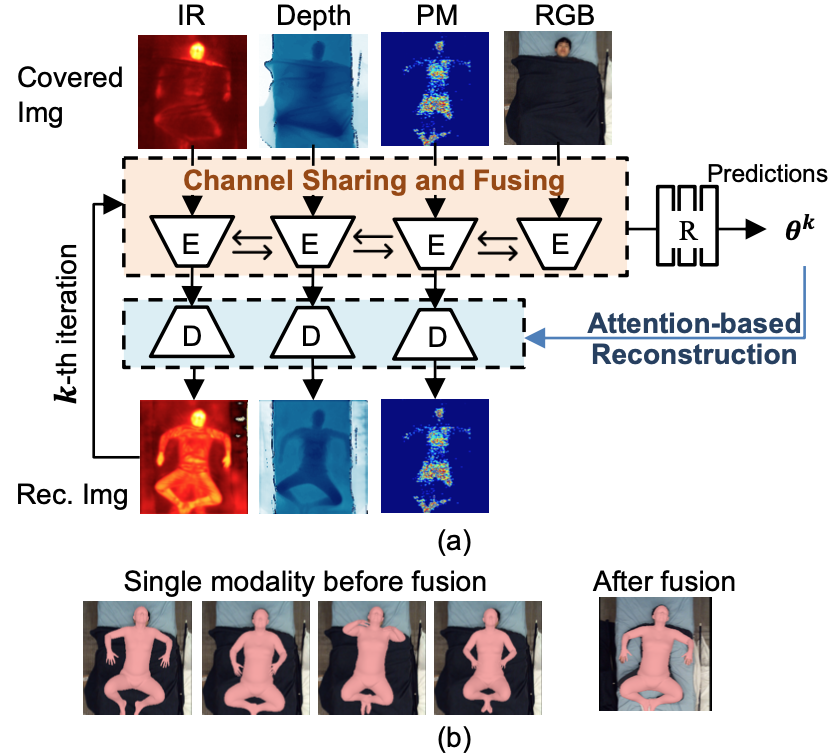

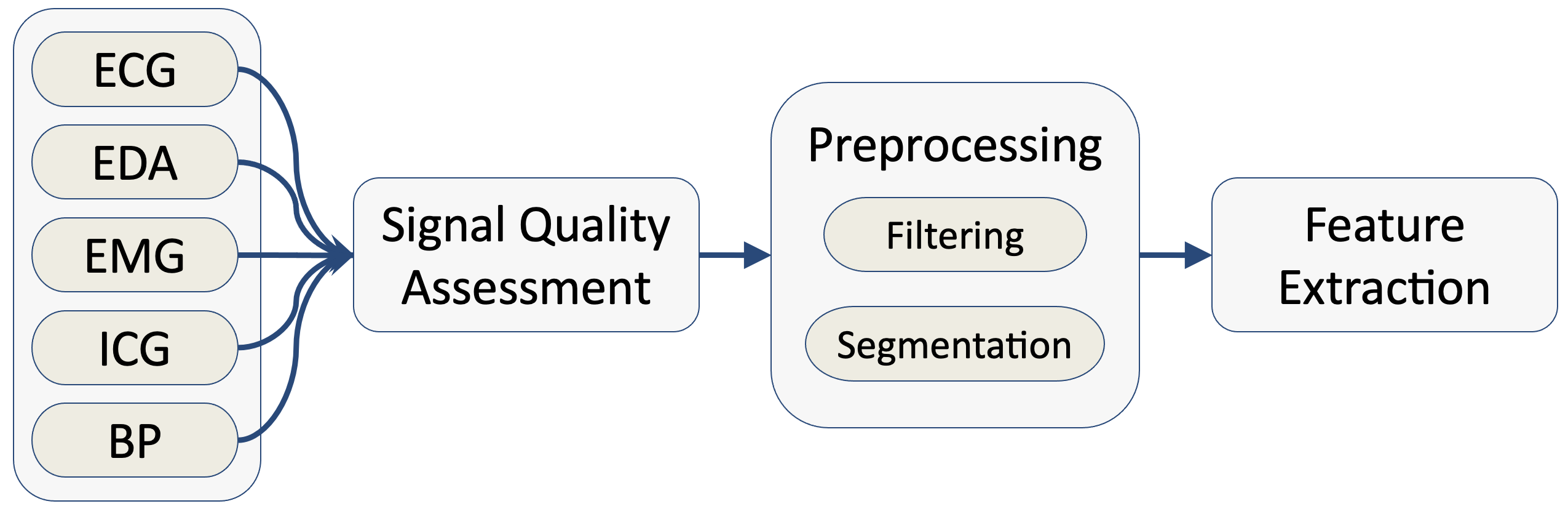

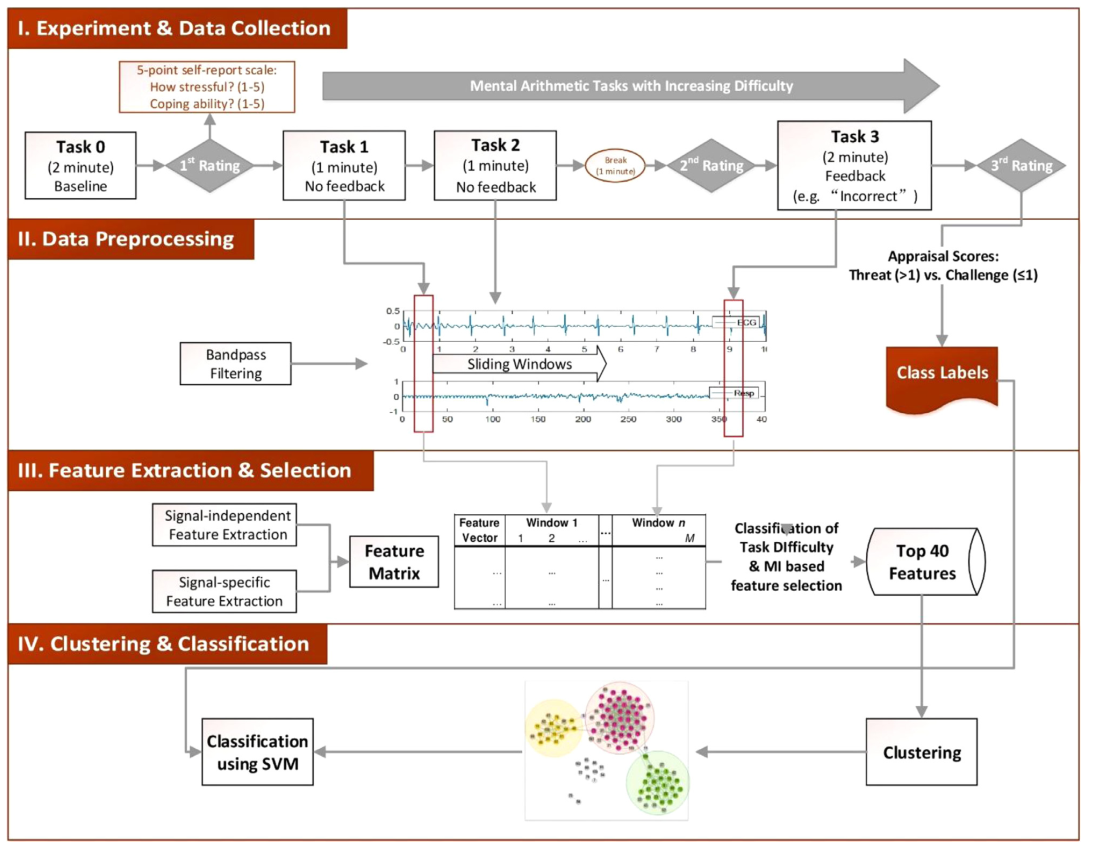

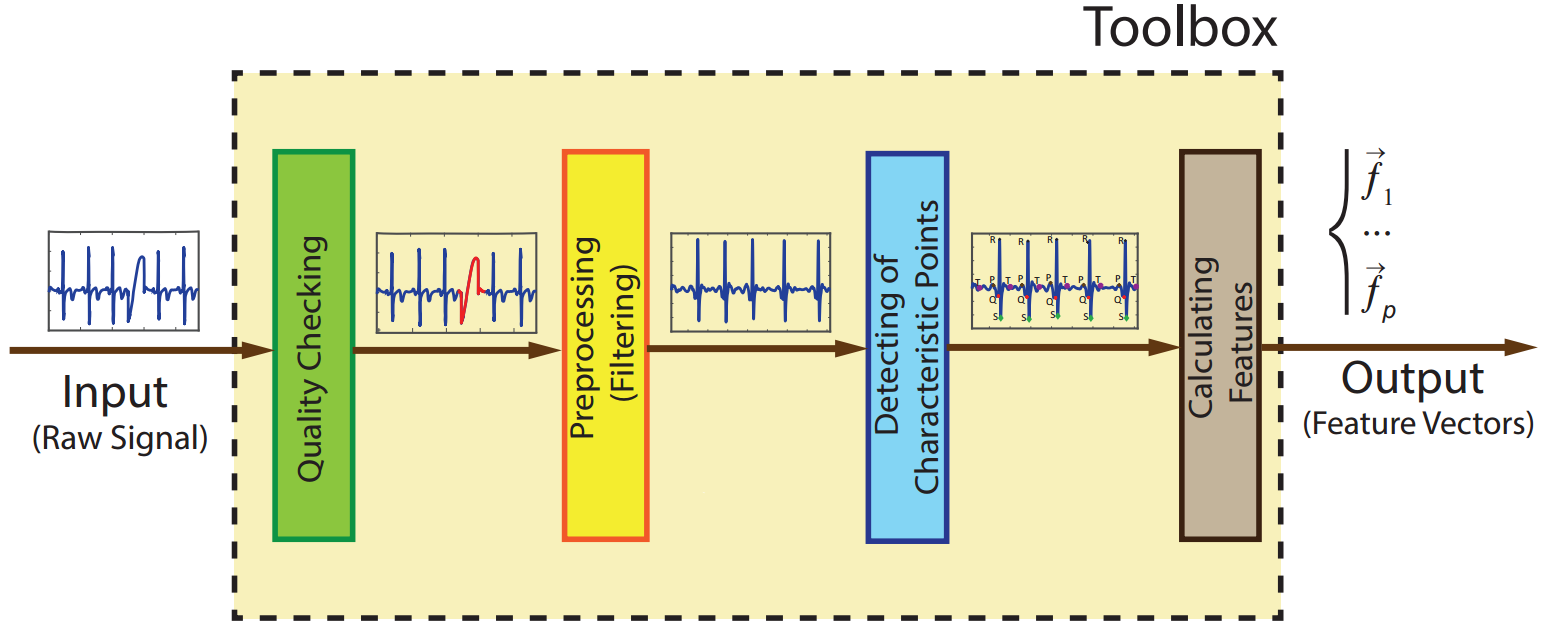

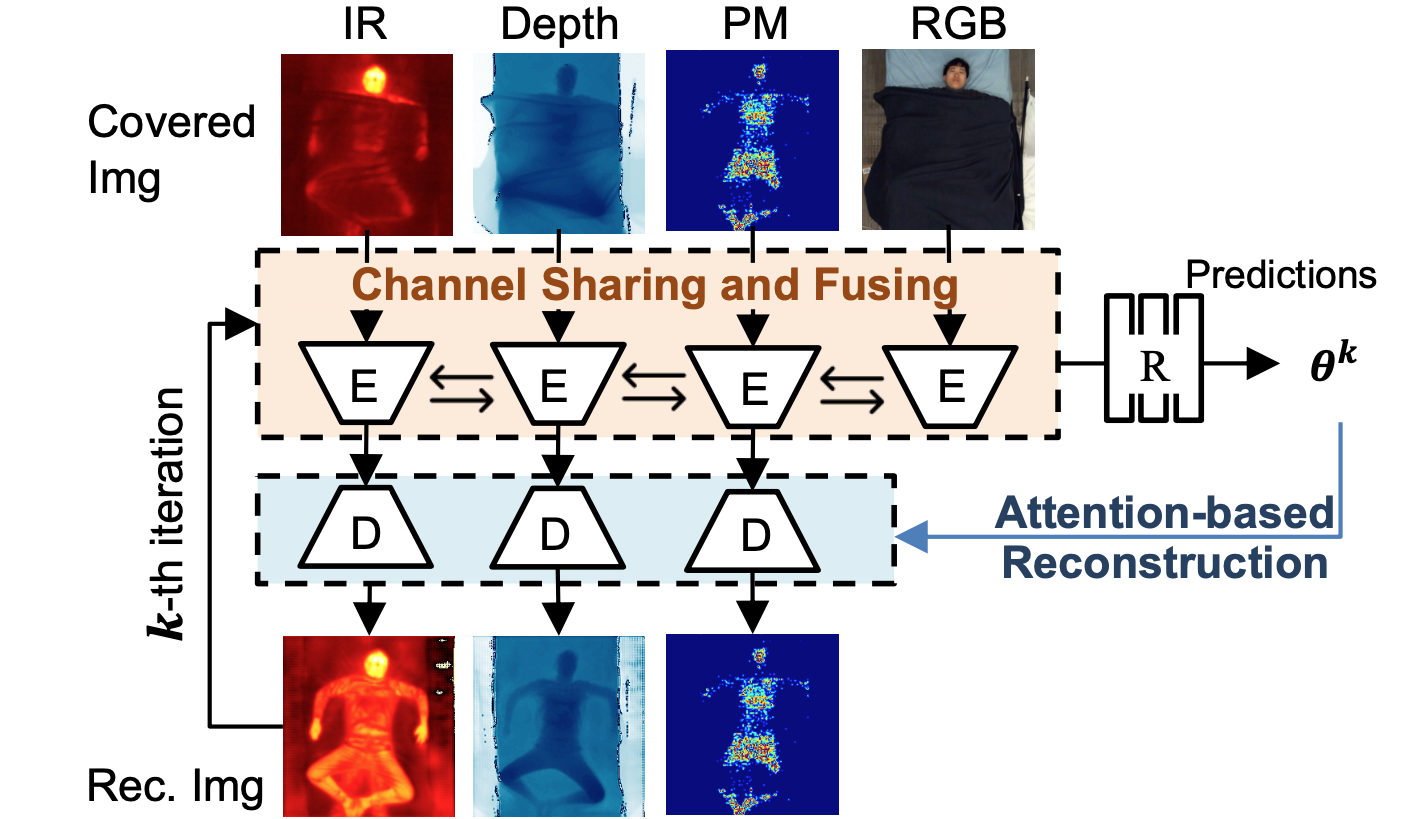

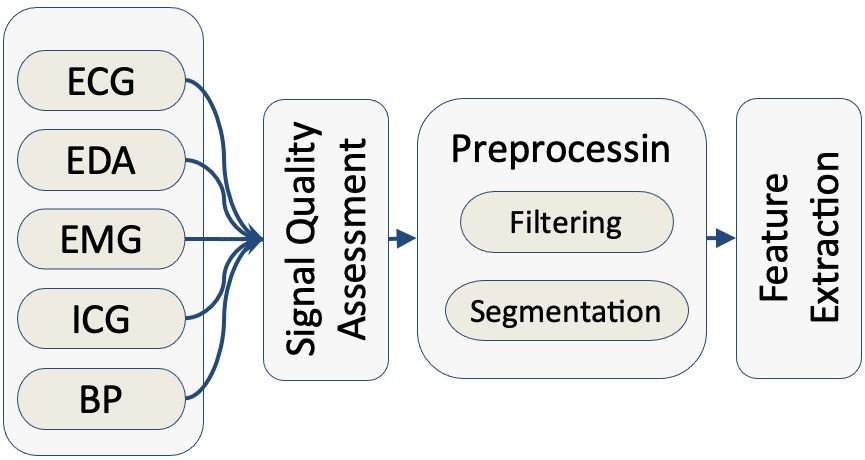

Yu Yin, Mohsen Nabian, Miolin Fan, ChunAn Chou, Maria Gendron, and Sarah Ostadabbas Affective Computing Workshop of the International Joint Conferences on Artificial Intelligence (IJCAI Workshop), 2018 Abstract arXiv Code Demo Slides |

|

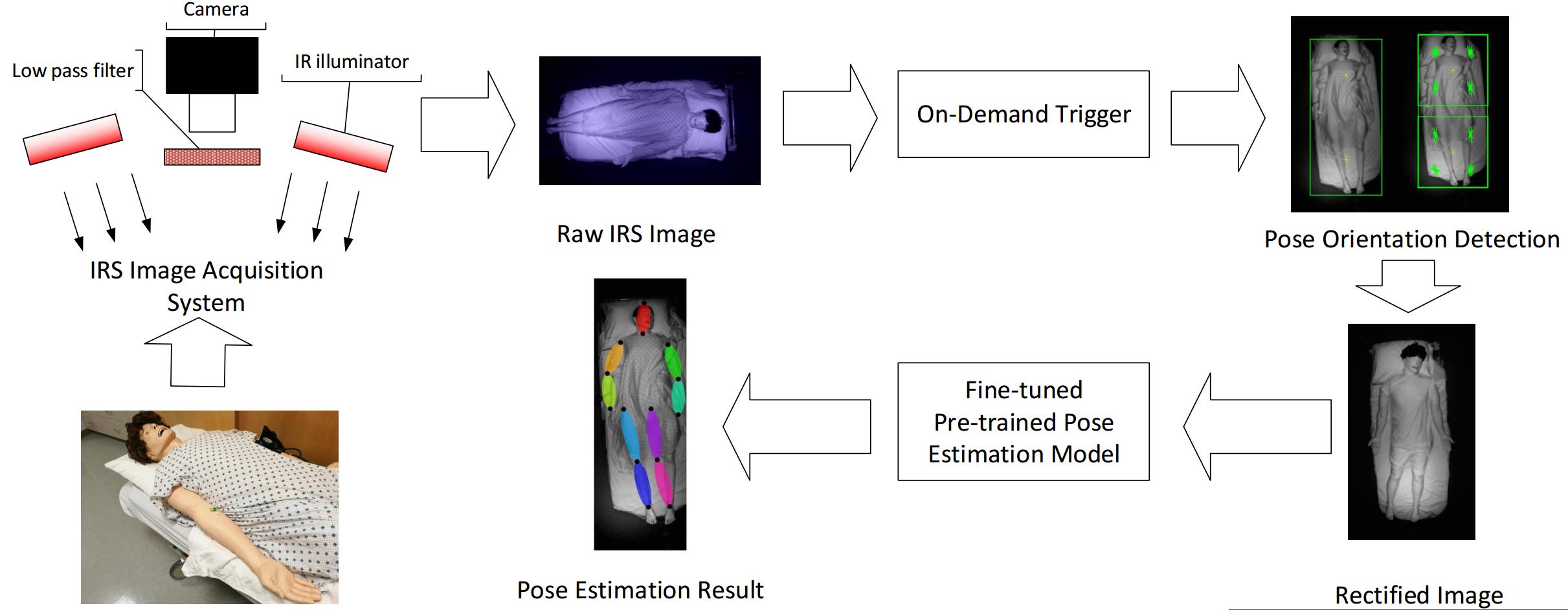

Yu Yin*, Mohsen Nabian*, Athena Nouhi*, and Sarah Ostadabbas (* equal contribution) IEEE-NIH Special Topics Conference on Healthcare Innovations and Point-of-Care Technologies (HI-POCT), 2017 Abstract PDF Matlab Code Poster |

Shuangjun Liu, Yu Yin, and Sarah Ostadabbas
IEEE Journal of Translational Engineering in Health and Medicine (JTEHM), 2019
Abstract arXiv Code Project

- "Analysis of Multimodal Physiological Signals Within and Across Individuals to Predict Psychological Threat vs. Challenge"
  Aya Khalaf, Mohsen Nabian, Miaolin Fan, Yu Yin, Jolie Wormwood, Erika Siegel, Karen S. Quigley, Lisa Feldman Barrett, Murat Akcakaya, Chun-An Chou, and Sarah Ostadabbas
  Expert Systems With Applications (ESWA), vol. 140, Feb. 2020
  PsyArXiv Abstract